Call for papers

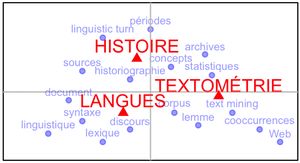

Computational methods of text analysis (lexicometry, computational linguistics, text mining, distant reading...) are developing rapidly in many scientific fields and in society at large. Such methods are of interest to many sectors (private companies, public administration, intelligence, data journalism, etc.). They are also of growing importance in the humanities, especially among digital humanities researchers. This has led to a number of conferences and regular scientific events, such as the French JADT (Journées d'Analyse des Données Textuelles), and to several recent syntheses (Léon & Loiseau 2016; Jenset & McGillivray 2017).

Within this movement, historians occupy a paradoxical position. Their work relies largely on texts used as sources and, following the evolution of modern historiography, they have shown a growing interest in the discourses and representations of past societies and individuals. Accordingly, text analysis methods enjoyed considerable success and prominence among French historians as early as the 1970s, especially at the Centre de lexicologie politique of the ENS Fontenay/Saint-Cloud. However, despite the influence of the linguistic turn and the development of more powerful and more accessible software, historians have used text analysis less frequently in recent years, even though such methods continue to prove useful (Genet 2011). The limited presence of historians at the JADT is symptomatic of this.

Today, text analysis is regaining momentum thanks to text mining, which can help sort the massive amounts of textual data produced by the digitization of sources (such as the Corpus project of the Bibliothèque nationale de France; Moiraghi 2018).

The aim of this conference is to understand the current uses of computational and statistical text analysis in history, at a time when the intellectual, social and technical context is changing. Several questions arise when assessing these uses and their contribution to history.

1. The historiography of text analysis

Historians have long reflected on how to combine history, linguistics and statistics (Robin 1973; Guilhaumou, Maldidier & Robin 1994; Genet 2011; Léon 2015; Léon & Loiseau 2016), and this historiographical current is still open. One can revisit the fruitful moments of this collaboration, such as the work of the Centre de lexicologie politique of the ENS Fontenay/Saint-Cloud or that of the laboratory of statistical linguistics at the University of Nice. It is also worth asking why some scientific and intellectual enterprises that first appeared promising did not ultimately achieve the same success, for example the work of Michel Pêcheux and Denise Maldidier. One may also consider the intellectual trajectories of historians such as Jacques Guilhaumou and Régine Robin, who began their research with lexical statistics before turning to methodologies closer to a more traditional conceptual history.

2. New methods for new corpora

2.1. The new sources and objects of text analysis

In France, text analysis was originally applied to political and trade-union texts. While this field of study is still active (Mayaffre 2010) and can even reach a general audience (Alduy 2017; Souchard, Wanich & Cuminal 1998), it is worth considering which other types of sources can be analyzed in this way. Some kinds of writing have strong idiosyncrasies, for example charters, diplomatic cables or legal texts. Others are characterized by their specific context of production (orality, private or intimate writings, literary texts, etc.). Which questions and approaches are relevant for such material?

A language can also be treated as a historical object in itself, especially when it serves as a tool of empowerment or domination. Serge Lusignan highlighted this through a qualitative approach in his essays in sociolinguistic history (Lusignan 2004 and 2012). Similarly, the linguistic dimensions of domination are central to gender history and postcolonial studies. What can text analysis offer in this respect? How can such methods help us grasp these discursive phenomena?

At the same time, a number of fields in history have been deeply influenced by the archival turn (for example Clanchy 1979 and Chastang 2008 for medieval history, or Guyotjeannin 1995). In this approach, sources are considered objects in their own right, and greater attention is paid to their mode of production and their conditions of preservation in order to better understand what they say. Is text analysis then less relevant, or can it shed new light on the document itself: its formal or material aspects, its genesis and its evolution?

2.2. Text analysis, big data, and the historian

Statistical approaches to text analysis require a fairly large and representative corpus in order to produce significant results. The ideal size of such a corpus is an open question, but one can also ask how textual material can be studied at different levels of magnification and with complementary methods (data mining on large corpora vs. focused analysis of a specific lexicon, for example). Historians must reflect on this shift now that corpora of digitized and born-digital sources (such as websites) are growing rapidly. How can they take ownership of these new materials, and what can they say, armed with their critical knowledge of sources, about how these corpora are constituted and used? Recent publications show that this transformation can benefit historians of all periods (for example Perreaux 2014 in medieval history) and that it is redefining the geography of historical research (Putnam 2016).

3. The development of the statistical toolkit of text analysis

3.1. Temporality

Corpora with a diachronic structure raise specific issues. Historians working on such material have long tackled the problem of anachronism (Prost 1988), while more recent work has focused on visualizing temporality (Ratinaud & Marchand 2015). In text analysis, the vocabulary itself can reveal a periodization useful to the historian. Some statistical methods (factorial analysis, topic modeling) can show the evolution of a lexicon by highlighting words entering and leaving a corpus, but changes in the meaning of those words remain difficult to grasp. How can such semantic transformations be detected? And how can discontinuous series of texts spanning a long period be exploited? These questions matter to historians, whose work deals with temporality by definition, but they are also particularly relevant to digital writing, which is frequently organized chronologically (such as Facebook or Twitter posts).
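As a rough illustration of words "entering and leaving" a diachronic corpus, the Python sketch below compares the vocabulary of consecutive periods. It is a plain presence/absence comparison, not factorial analysis or topic modeling, and a real study would compare relative frequencies with a statistical test rather than bare presence. The function names, the `min_count` threshold and the toy sentences are all invented for this example.

```python
from collections import Counter
import re

def tokenize(text):
    # Naive lowercase word tokenizer (assumption: no lemmatization or stop-word filtering)
    return re.findall(r"\w+", text.lower())

def lexicon_by_period(docs):
    """Hypothetical helper: (period, text) pairs -> {period: Counter of word counts}."""
    counts = {}
    for period, text in docs:
        counts.setdefault(period, Counter()).update(tokenize(text))
    return counts

def lexical_turnover(counts, min_count=1):
    """Hypothetical helper: for each pair of consecutive periods,
    return (earlier, later, words entering, words leaving)."""
    periods = sorted(counts)
    result = []
    for earlier, later in zip(periods, periods[1:]):
        # A word counts as "present" in a period if it occurs at least min_count times
        before = {w for w, c in counts[earlier].items() if c >= min_count}
        after = {w for w, c in counts[later].items() if c >= min_count}
        result.append((earlier, later, sorted(after - before), sorted(before - after)))
    return result

# Invented toy corpus, for illustration only
docs = [
    (1789, "the king and the nation"),
    (1790, "the nation and the law"),
    (1793, "the republic and the law"),
]
for earlier, later, entering, leaving in lexical_turnover(lexicon_by_period(docs)):
    print(f"{earlier}->{later}: in {entering}, out {leaving}")
# 1789->1790: in ['law'], out ['king']
# 1790->1793: in ['republic'], out ['nation']
```

Raising `min_count` filters out rare words, which in a real corpus would otherwise dominate the lists of "new" and "vanished" vocabulary.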

3.2. Innovative algorithms for text analysis

Since Lebart and Salem's seminal book (Lebart & Salem 1994), researchers have shared a common set of statistical concepts and tools, implemented in free software, but new methodologies offer innovative ways to analyze a corpus. In addition to topic models, a tool like Linkage uses written exchanges to build a classification of a social network, while some machine-learning methods relying on word vectors (embedding layers, Word2Vec, GloVe) can quickly summarize and compare documents (Levy & Goldberg 2014; Barron et al. 2018). How can historians use these new methods?

3.3. Qualitative and quantitative approaches

The computer tools available to social scientists for linguistic investigation are not necessarily statistical. Software such as NooJ enables a precise formalization of natural languages, thereby enriching our understanding of a language at a given stage and over time.

More broadly, the role of qualitative approaches must also be acknowledged. Combining them with quantitative methods is fruitful (Paveau 2012), and they must be taken into account to fully address the possible relationships between language and history. Contributions illustrating and discussing the benefits of these different methods for history will be most welcome.

Bibliography

- Alduy C., Ce qu’ils disent vraiment : les politiques pris au mot, Paris, Éditions du Seuil, 2017.

- Barron A. T. J., Huang J., Spang R. L., DeDeo S., « Individuals, Institutions, and Innovation in the Debates of the French Revolution », Proceedings of the National Academy of Sciences, 2018.

- Bécue Bertaut M., Analyse textuelle avec R, Rennes, Presses universitaires de Rennes, 2018.

- Chastang P., « L’archéologie du texte médiéval. Autour de travaux récents sur l’écrit au Moyen Âge », Annales. Histoire, Sciences sociales, 2008, vol. 63, n° 2, pp. 245-269.

- Clanchy M., From Memory to Written Record: England 1066-1307, London, E. Arnold, 1979.

- Genet J.-P., « Langue et histoire : des rapports nouveaux », in Bertrand J.-M., Boilley P., Genet J.-P. et Schmitt-Pantel P. (dirs.), Langue et histoire. Actes du colloque de l'école doctorale d'histoire de Paris 1. INHA, 20 et 21 octobre 2006, Paris, Publications de la Sorbonne, 2011.

- Guilhaumou J., Maldidier D. et Robin R., Discours et archive : expérimentations en analyse du discours, Liège, Mardaga, 1994.

- Guyotjeannin O., « L'érudition transfigurée », in Boutier J. et Julia D. (dirs.), Passés recomposés. Champs et chantiers de l'Histoire, Paris, Autrement, 1995 (coll. « Mutations »), pp. 152-162.

- Jenset G. B. et McGillivray B. (dirs.), Quantitative Historical Linguistics: A Corpus Framework, Oxford, New York, Oxford University Press, 2017.

- Lebart L. et Salem A., Statistique textuelle, Paris, Dunod, 1994.

- Léon J., Histoire de l’automatisation des sciences du langage, Lyon, ENS Éditions, 2015.

- Léon J. et Loiseau S. (dirs.), History of Quantitative Linguistics in France, Lüdenscheid, RAM-Verlag, 2016 (Studies in Quantitative Linguistics, n° 24).

- Levy O. et Goldberg Y., « Dependency-Based Word Embeddings », Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Short Papers), Association for Computational Linguistics, 2014, p. 302-308.

- Lusignan S., La langue des rois du Moyen âge : le français en France et en Angleterre, Paris, PUF, 2004.

- Lusignan S., Essai d'histoire sociolinguistique : le français picard au Moyen Âge, Paris, Classiques Garnier, 2012.

- Mayaffre D., Vers une herméneutique matérielle numérique. Corpus textuels, Logométrie et Langage politique, Habilitation à diriger des recherches, Université Nice Sophia Antipolis, 2010.

- Moiraghi E., Le projet Corpus et ses publics potentiels. Une étude prospective sur les besoins et les attentes des futurs usagers, janvier 2018.

- Paveau M.-A., « L'alternative quantitatif/qualitatif à l'épreuve des univers discursifs numériques », conférence plénière au colloque Complémentarité des approches quantitatives et qualitatives dans l'analyse des discours ?, Amiens, Université de Picardie, CURAPP, 10-11 mai 2012 (présentation : http://penseedudiscours.hypotheses.org/9711).

- Perreaux N., L'écriture du monde. Dynamique, perception, catégorisation du mundus au Moyen-âge (VIIème - XIIIème siècles). Recherches à partir de bases de données numérisées, thèse de doctorat, Université de Bourgogne, 2014.

- Prost A., « Les mots », in Rémond R. (dir.), Pour une histoire politique, Paris, Éditions du Seuil, 1988, pp. 255-258.

- Ratinaud P. et Marchand P., « Des mondes lexicaux aux représentations sociales. Une première approche des thématiques dans les débats à l'Assemblée nationale (1998-2014) », Mots. Les langages du politique, 2015, vol. 2, n° 108, pp. 57-77.

- Robin R., Histoire et linguistique, Paris, Armand Colin, 1973.

- Salem A., « Approches du temps lexical », Mots, 1988, vol. 17, n° 1, pp. 105-143.

- Souchard M., Wanich S. et Cuminal I., Le Pen, les mots : analyse d’un discours d’extrême-droite, Paris, La Découverte, Le Monde Édition, 1998.